Who Checks the Magic Square?

Identifying Systemic Bottlenecks to Science

This is my submission to the Astera Essay Competition on identifying systemic bottlenecks to science. I point out what I consider the single biggest obstacle for the social sciences: verifying quality. I offer three solutions and no optimism.

Dürer's engraving Melencolia I depicts a winged personification of Melancholy surrounded by the tools of geometry, seated in paralysis. They can measure the world but not overcome it.

Let me start with two stylized facts. First, tools of artificial intelligence (AI) are here to stay. They have already changed science. Second, science, and social science in particular, has always had an incentive problem: research quality is cheap to imitate but expensive to verify, and imitation keeps getting cheaper.

A magical solution: the reviewer ex machina

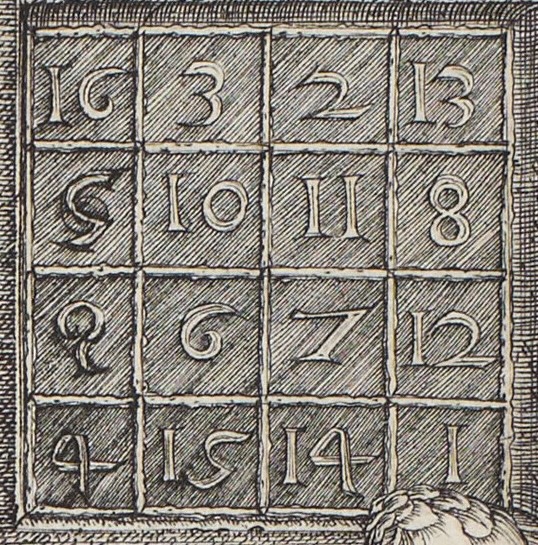

In Melencolia I, a four-by-four magic square sits high on the wall. Every row, column, and diagonal sums to 34. The two middle cells of the bottom row read 15 and 14, encoding 1514, the year of the print. A computer can produce millions of these squares in milliseconds, but who will check whether they are indeed magic squares?

This brings me to my first solution: the reviewer ex machina. “Obviously,” I hear you say, “the solution is an AI-assisted review process.”

Perhaps there is a real case for this. AI systems can already check mathematical proofs, flag inconsistencies, inspect code, and attempt autonomous replications. Recent work on agentic reproduction of social-science results¹ is exactly the sort of advance that makes this tempting: let the machine read the paper and produce an evaluation. But this solves only the easiest part of verification. It can tell us whether the rows add to 34. It cannot yet tell us whether 34 was the right thing to measure, whether we care about 34, whether the identifying assumption is credible, or whether the answer was convenient or innovative. Crucially, judgment itself does not scale, because someone, a person, still has to spend time verifying the scientific quality.
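To make the easy part concrete, here is a minimal Python sketch of the mechanical check: do the rows, columns, and diagonals of a square all add to the same number? The grid is my transcription of the square as it is commonly reproduced from the engraving, and the names `DURER_SQUARE` and `is_magic` are illustrative, not taken from any existing tool.

```python
from typing import List

# Transcription of the square in Melencolia I, rows from top to bottom.
# (Assumption: transcribed from common reproductions of the engraving.)
DURER_SQUARE: List[List[int]] = [
    [16, 3, 2, 13],
    [5, 10, 11, 8],
    [9, 6, 7, 12],
    [4, 15, 14, 1],
]


def is_magic(square: List[List[int]]) -> bool:
    """Return True if every row, column, and both diagonals share one sum."""
    n = len(square)
    # Reject empty or non-square grids before doing any arithmetic.
    if n == 0 or any(len(row) != n for row in square):
        return False

    target = sum(square[0])
    row_sums = [sum(row) for row in square]
    col_sums = [sum(square[r][c] for r in range(n)) for c in range(n)]
    main_diag = sum(square[i][i] for i in range(n))
    anti_diag = sum(square[i][n - 1 - i] for i in range(n))

    return all(s == target for s in row_sums + col_sums + [main_diag, anti_diag])


if __name__ == "__main__":
    print(is_magic(DURER_SQUARE), sum(DURER_SQUARE[0]))
```

The check is a dozen lines and runs in microseconds. Nothing in it says whether 34 was worth measuring.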

A tried solution: show all your work

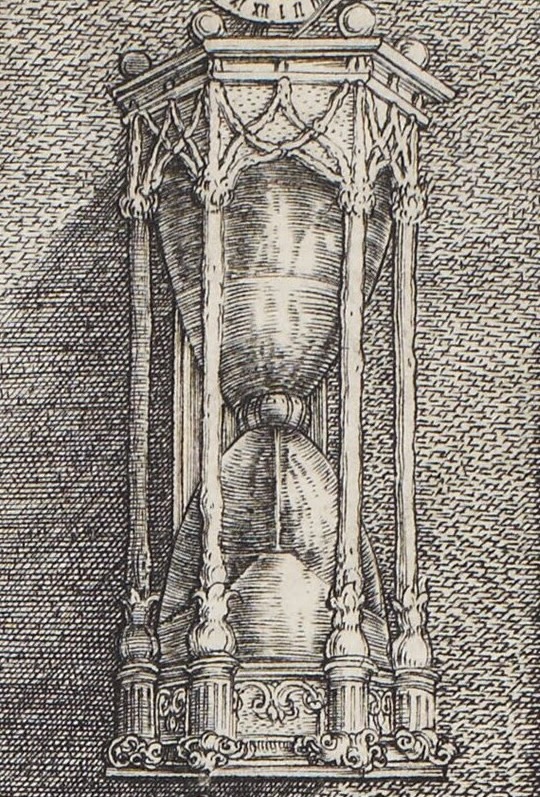

Dürer filled the engraving with an epistemic apparatus in the form of tools. We see keys and mathematical instruments. There is an hourglass. Melancholy is holding, dejectedly, a pair of dividers. All of these tools, in my interpretation, suggest that knowledge and transparency will fix the problem.

This brings me to my second solution: more disclosure. We in finance and accounting love disclosure.

Disclosing how the research was produced is the open-science answer: public data, public code, preregistration, registered reports, data-availability statements, AI-use statements, and perhaps soon LLM-readable papers.² I am in favor of all of this. However, from 2013 to 2016 I worked at the Open Data Institute³ in London, during what I now think of as the open-data bubble. McKinsey had estimated that open data could unlock more than $3 trillion in annual value.⁴ I used to think that better data standards were the work. The problem, in retrospect, was that the commercial incentives were never there to fully realize the vision, and I believe the incentives in science are likewise not there to move fully to an open and reproducible state of research production.

In conclusion

The systemic bottleneck in the social sciences is clear and, I would argue, known: verifying quality. My answer to what to test is probably the most scientific one: it depends, and yes, some version of all of the above. I expect little voluntary social change, and disclosure alone has already shown its limits. So if I had to bet on one practical experiment, I would test a fair, automated, and integrated (!) version of the reviewer ex machina. The machine, however, should not pretend to certify truth. It should produce a public record of what was checked, what failed, what was repaired, and what still requires human judgment.
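What such a public record might look like can be sketched as a small data structure. This is only an illustration under my own assumptions: the class names, field names, and the four status labels are hypothetical and simply mirror the four categories named above (checked, failed, repaired, requires human judgment).

```python
import json
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Status(Enum):
    # Hypothetical labels mirroring the four categories in the text.
    PASSED = "passed"
    FAILED = "failed"
    REPAIRED = "repaired"
    NEEDS_HUMAN = "needs_human_judgment"


@dataclass
class Check:
    name: str
    status: Status
    note: str = ""


@dataclass
class ReviewRecord:
    paper_id: str
    checks: List[Check] = field(default_factory=list)

    def add(self, name: str, status: Status, note: str = "") -> None:
        self.checks.append(Check(name, status, note))

    def open_questions(self) -> List[str]:
        """Names of the checks the machine explicitly hands back to a person."""
        return [c.name for c in self.checks if c.status is Status.NEEDS_HUMAN]

    def to_json(self) -> str:
        """Serialize the record so it can be published alongside the paper."""
        payload = {
            "paper_id": self.paper_id,
            "checks": [
                {"name": c.name, "status": c.status.value, "note": c.note}
                for c in self.checks
            ],
        }
        return json.dumps(payload, indent=2)


if __name__ == "__main__":
    record = ReviewRecord(paper_id="example-paper")  # placeholder identifier
    record.add("rows, columns, diagonals sum to 34", Status.PASSED)
    record.add("is 34 the right thing to measure?", Status.NEEDS_HUMAN)
    print(record.to_json())
```

The design choice that matters is the last status: the record never reports "true", only what was checked and what remains for a human reader.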